Why We Chose RAG as the Foundation of Our Enterprise AI Platform

A Founder’s Perspective

Praful Pujar

1/23/2026 · 3 min read

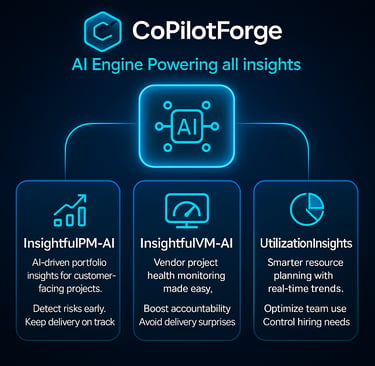

When we began building our AI-led products—InsightfulPM-AI, UtilizationInsights, and InsightfulVM-AI—we were not trying to chase the latest AI trend. We were trying to solve a very specific, persistent problem we had seen repeatedly across large transformation programs:

Decision-makers lacked timely, reliable visibility into what was actually happening.

Not because teams were incompetent. Not because vendors were incapable. But because information was fragmented, delayed, and disconnected from decision-making moments.

That reality forced us to confront a fundamental architectural question early on:

How should AI truly work inside complex enterprise environments?

The Reality Behind Most Transformation Programs

On paper, transformation programs look structured and well-governed. In practice, they are anything but simple.

· Projects are executed across multiple vendors.

· Dependencies span internal teams, suppliers, and partners.

· Budgets are committed across contracts, milestones, and phases.

· Risks surface gradually—often too late.

And critically, the information required to manage all of this rarely lives in one place.

· Project plans sit in one system.

· Vendor commitments in another.

· Dependencies in spreadsheets.

· Financials in ERP tools.

· Risks buried in decks, emails, and meeting notes.

Leaders are then expected to make confident decisions by stitching together partial views—often manually and retrospectively. Over time, we realized that this fragmentation, not execution capability, is what undermines governance.

Why “Generic AI” Breaks in Enterprise Contexts

The rise of Large Language Models (LLMs) has been extraordinary. Their ability to reason, summarize, and generate language is genuinely impressive. But in enterprise transformation environments, LLMs alone expose a critical limitation:

They don’t understand your reality.

A generic LLM can:

· Summarize documents

· Generate insights from patterns

· Provide articulate responses

But it cannot:

· Understand live project status

· Reason over vendor-specific obligations

· Interpret dependency ownership

· Assess budget utilization or risk exposure

Without access to real, structured operational data, AI responses—no matter how polished—remain abstract. For leadership teams making high-stakes decisions, abstraction is dangerous. In governance, confidence without grounding is worse than no insight at all.

The Principle That Shaped Everything

Very early, we aligned on a core belief that became non-negotiable: AI must reason over your data, not guess.

This principle guided every design and architecture decision that followed.

We didn’t want AI that simply sounds intelligent. We wanted AI that leaders could trust, challenge, and act upon. That requirement naturally led us to Retrieval-Augmented Generation (RAG).

What RAG Enables—Beyond the Buzzword

At its core, RAG combines two capabilities:

· The reasoning and language abilities of LLMs

· The retrieval of relevant, enterprise-specific data at query time

In our platform, this means AI operates over:

· Project and portfolio data

· Vendor and supplier commitments

· Dependency structures and ownership

· Resource utilization metrics

· Financial and budget information

This data is embedded, indexed, and retrieved at query time as vectorized enterprise context, so that AI responses are grounded in the organization’s actual data rather than in the model’s general knowledge. When a leader asks a question, the system doesn’t speculate: it retrieves the relevant facts first, then reasons over them. This distinction is subtle, but transformational.
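The retrieve-then-reason flow described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not our platform’s implementation. A term-count vector stands in for a real embedding model, and an in-memory list stands in for a vector database. The `Record` schema, `VectorIndex`, `build_grounded_prompt`, and the sample records are all hypothetical. The LLM call itself is omitted, so the sketch stops at the grounded prompt that would be sent to the model.

```python
from __future__ import annotations

import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Record:
    source: str  # originating system, e.g. "vendor_contract" (hypothetical)
    text: str    # the fact itself


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a term-count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class VectorIndex:
    """In-memory index: each record is embedded once, at indexing time."""

    def __init__(self, records: list[Record]):
        self.entries = [(embed(r.text), r) for r in records]

    def retrieve(self, query: str, k: int = 2) -> list[Record]:
        """Embed the query and return the k most similar records."""
        q = embed(query)
        ranked = sorted(self.entries, key=lambda e: cosine(q, e[0]), reverse=True)
        return [r for _, r in ranked[:k]]


def build_grounded_prompt(question: str, facts: list[Record]) -> str:
    """Assemble the LLM input from retrieved facts only, tagged with their sources."""
    context = "\n".join(f"[{r.source}] {r.text}" for r in facts)
    return (
        "Answer using only the facts below and cite the source of each claim. "
        "If the facts are insufficient, say so.\n\n"
        f"Facts:\n{context}\n\nQuestion: {question}"
    )


if __name__ == "__main__":
    index = VectorIndex([
        Record("vendor_contract", "Vendor Acme must deliver the data migration by March."),
        Record("dependency_log", "Data migration blocks the CRM cutover owned by Team Blue."),
        Record("budget_report", "Marketing budget is 40 percent utilized."),
    ])
    question = "Which vendor risks the CRM cutover?"
    # 1. Retrieve relevant facts first...
    hits = index.retrieve(question, k=2)
    # 2. ...then hand only those facts to the model (LLM call omitted here).
    print(build_grounded_prompt(question, hits))
```

The design choice that matters is the ordering. Retrieval narrows the model’s input to specific, source-tagged facts before any generation happens. In this example the dependency log and vendor contract are retrieved, and the unrelated budget report is left out. Tagging each fact with its source is also what makes an answer traceable back to a plan, contract, or log.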

Why This Matters at the Leadership Level

RAG changes not just how AI answers questions, but which questions leaders can safely ask. Instead of relying on static dashboards or post-facto reports, leaders can engage in a dialogue with their programs:

· Where are dependencies accumulating across vendors?

· Which suppliers are introducing downstream risk?

· How is resource utilization impacting delivery timelines?

· What budget is underutilized, over-committed, or at risk?

· What actions should I take this week to prevent slippage?

These are not reporting questions. They are governance questions. RAG allows AI to answer them with context, traceability, and relevance—qualities essential for executive decision-making.

One Architectural Foundation, Multiple Domains

Another advantage of RAG is consistency at scale. We deliberately designed the same architectural foundation across:

· InsightfulPM-AI (project portfolio and program governance)

· UtilizationInsights (resource and capacity intelligence)

· InsightfulVM-AI (multi-vendor performance, multi-vendor dependency, and financial risk management)

Different lenses. Same intelligence backbone.

This ensures organizations don’t end up with siloed AI tools that can’t reason together. Instead, intelligence compounds over time.
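One way to picture a shared backbone is a single context store and retrieval routine that every product queries, with each product applying its own lens as a filter. The sketch below is illustrative only. The domain tags (`portfolio`, `utilization`, `vendor`), `SharedContextStore`, and the sample records are hypothetical, and for brevity simple keyword overlap stands in for embedding similarity. It is not a description of how our products are actually wired.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    domain: str  # hypothetical lens tag: "portfolio", "utilization", or "vendor"
    source: str  # originating system, kept for traceability
    text: str


def tokens(text: str) -> set[str]:
    """Lower-cased word set; a simplified stand-in for an embedding."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


class SharedContextStore:
    """One store and one retrieval routine shared by every product lens."""

    def __init__(self, records: list[Record]):
        self.records = records

    def retrieve(self, query: str, domains: set[str] | None = None, k: int = 3) -> list[Record]:
        """Rank records by keyword overlap, optionally restricted to some domains."""
        q = tokens(query)
        pool = [r for r in self.records if domains is None or r.domain in domains]
        scored = [(len(q & tokens(r.text)), r) for r in pool]
        # Stable sort: ties keep their original insertion order.
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [r for score, r in scored[:k] if score > 0]


if __name__ == "__main__":
    store = SharedContextStore([
        Record("portfolio", "project_plan", "CRM cutover milestone slipped two weeks."),
        Record("utilization", "timesheets", "Team Blue is at 120 percent utilization during CRM cutover."),
        Record("vendor", "vendor_contract", "Vendor Acme owns the data migration feeding the CRM cutover."),
    ])
    # Vendor lens only (an InsightfulVM-AI-style view in this illustration).
    print([r.source for r in store.retrieve("CRM cutover risk", domains={"vendor"})])
    # No filter: one question draws on all three domains.
    print([r.source for r in store.retrieve("CRM cutover risk")])
```

Because the lenses share one store, a cross-domain question with no filter can draw on portfolio, utilization, and vendor context together. That is the sense in which intelligence compounds instead of staying siloed.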

AI Should Elevate Judgment—Not Replace It

We are often asked whether AI will replace PMOs, program leaders, or governance forums. Our answer has always been clear: no. AI should not replace judgment; it should strengthen it by providing earlier signals, clearer trade-offs, and better-informed conversations.

RAG enables this by grounding AI in facts—so leaders can debate, decide, and act with confidence.

A Closing Reflection

If you are evaluating or building enterprise AI, one question is worth asking early: is the AI reasoning over real operational data, or just generating text? The answer will determine whether AI becomes a strategic asset or an expensive distraction.

For us, RAG is not a feature we added later. It is the foundation of how we believe enterprise AI should work.